Augmented Analytics and Big Data Processing

Development and support of Data Lakes, and setup of systems for statistical analysis of the collected data

- High-speed loading of large data sets (terabyte scale)

- Creation of big data warehouses based on Exasol, SQream, Greenplum, and Hadoop

- Creation of data marts and calculation modules on top of the Data Lake

- Configuration of data warehouse lifecycle management

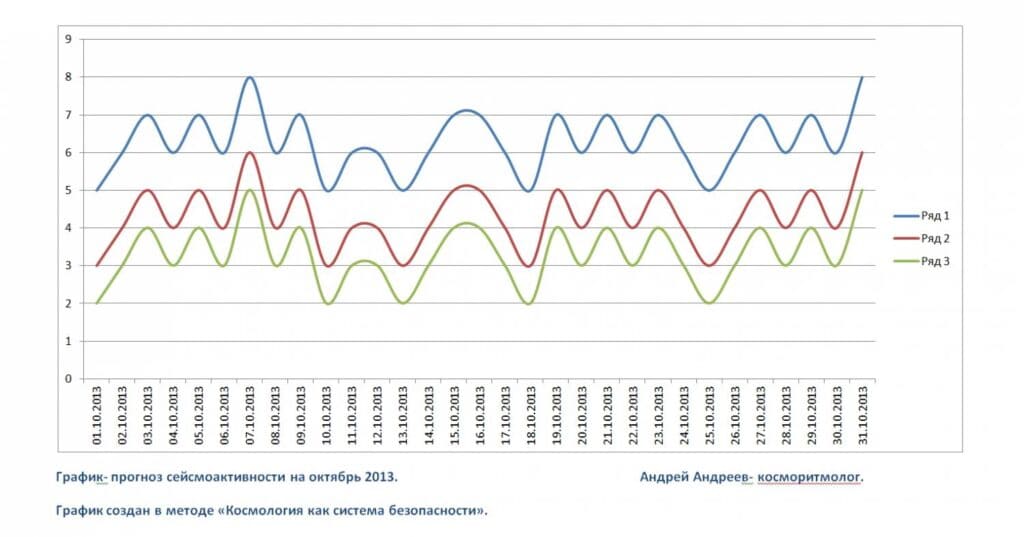

- Development of calculation models for statistical modeling and forecasting based on Python and R libraries, and integration of the calculation process with ETL processes

- Customization of models in commercial statistical analysis and forecasting products

- Embedding created models in web applications and cloud services

- Support for the Data Lake and the statistical analysis models